What is software architecture and why is it important?

Software powers almost every aspect of our everyday life. For businesses aiming to create their own software, understanding the foundational concepts is crucial. One such fundamental concept is software architecture. But what is software architecture?

The software industry itself has long struggled to define software architecture precisely. A well-known quote from computer science professor and writer Ralph Johnson is often used to describe it: “Architecture is about the important stuff…whatever that is.” In my opinion, this is probably the simplest yet still accurate answer one can give. To unpack the idea a bit further, let’s look at the different aspects of software architecture.

The software architect role carries responsibilities whose scale and scope continue to expand. Earlier, the role dealt solely with the technical aspects of software development, such as components and patterns, but as architectural styles and software development in general have evolved, the role has expanded as well. Constantly changing and emerging architecture styles, such as microservices and event-driven architecture, and even the use of AI, require architects to have a wider range of capabilities. Thus, the answer to “What is software architecture?” is a constantly moving target, and any definition given today will soon be outdated. In software development, change is the only constant: new technologies and ways of doing things emerge on a daily basis. While the world around software architects keeps changing, we must adapt and make decisions in this shifting environment.

Generally speaking, software architecture is often referred to as the blueprint of the system or as the roadmap for developing a system. In my opinion the answer lies within these definitions, but it is necessary to understand what the blueprint or the roadmap actually contains and why its decisions were made.

What does software architecture mean?

To get to the bottom of what software architecture actually contains and what should be analyzed when looking at an existing architecture, I like to think about software architecture as a combination of four factors or dimensions: the structure of a system, architecture characteristics, architecture decisions and design principles.

The structure of a system refers to the type of architecture used within the software, for example vertical slices or layers, microservices, or something else that fits the need in a particular case. Describing the structure alone does not, however, give the whole picture of the software architecture. Knowledge of the architecture characteristics, architecture decisions, and design principles is also required to understand the architecture of a system and what the requirements for the system are.

Architecture characteristics are aspects of software architecture that need careful consideration when planning the overall architecture of a system. What are the things that the software must do that are not directly related to domain functionality? These are often described as non-functional requirements: they do not require knowledge of the system's functionality, yet they are needed for the system to operate as required. They include aspects such as availability, scalability, security, and fault tolerance.

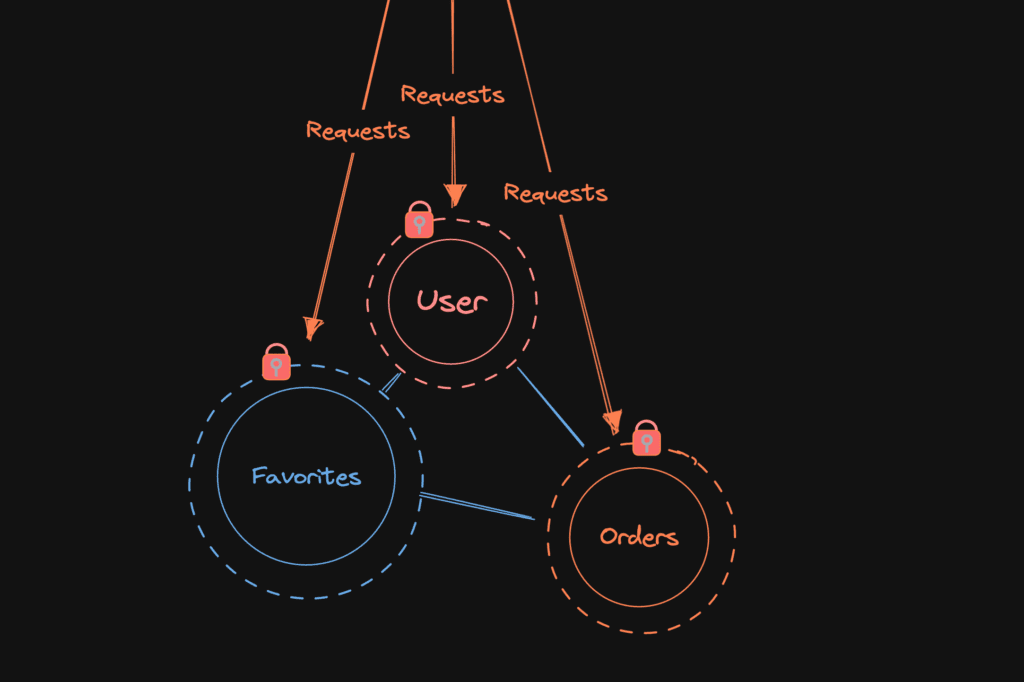

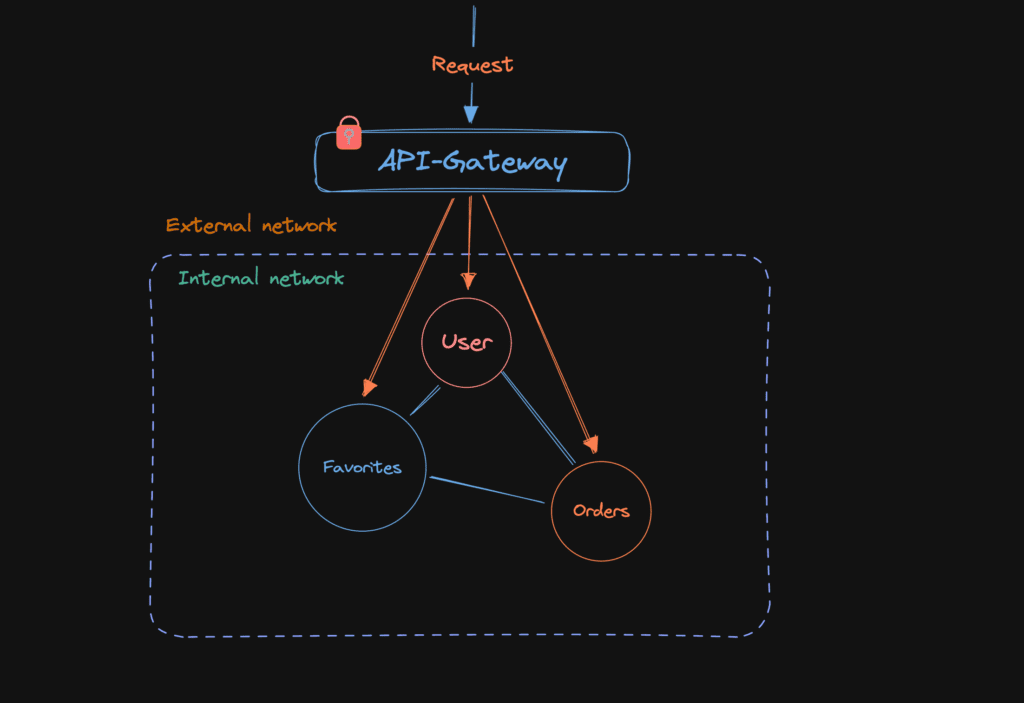

The next piece of the puzzle is architecture decisions. As the name implies, these define the rules that specify how a system should be built: what is allowed and what is not. Architecture decisions create the boundaries within which the development team must work and make its own development decisions. A simple example would be to forbid services from accessing databases directly and to allow access only through an API. Because change is inevitable, these rules will be challenged, and the trade-offs should always be considered carefully when changes to architecture decisions are discussed.
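One way to make a rule like the database-access example enforceable is an automated check that runs with the test suite. The following is only a minimal sketch under stated assumptions: the services are written in Python, and the forbidden driver list (`sqlite3`, `psycopg2`) and the function name `find_forbidden_imports` are hypothetical choices for illustration, not part of any particular tool.

```python
import ast

# Hypothetical rule: service code may not import database drivers directly.
FORBIDDEN_MODULES = frozenset({"sqlite3", "psycopg2"})


def find_forbidden_imports(source: str, forbidden: frozenset = FORBIDDEN_MODULES) -> list:
    """Return the forbidden top-level modules imported by the given source code."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue  # not an import statement (or a bare relative import)
        for name in names:
            top_level = name.split(".")[0]
            if top_level in forbidden:
                violations.append(top_level)
    return violations


if __name__ == "__main__":
    # A service that talks to the database directly breaks the rule...
    print(find_forbidden_imports("import sqlite3\n"))
    # ...while one that goes through a (hypothetical) API client does not.
    print(find_forbidden_imports("from orders_api import get_order\n"))
```

A check like this does not replace the discussion about trade-offs; it only makes a violation of an agreed decision visible early.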

The final dimension of software architecture is design principles. These can be thought of as guidelines for the development work rather than fixed rules that must be followed. For example, if an architecture decision cannot cover every case and condition, a design principle can state the preferred way of doing things and leave the final decision to the developer in the specific circumstance at hand.

Why is software architecture important?

It depends. Everything in architecture is a trade-off, which is why the answer to every architecture question ever is “it depends.” You cannot (or well, should not) search Google for an answer to whether microservices are the right architecture style for your project, because it does depend. It depends on the initial plans you have for the software, what the business drivers are, what the allocated budget is, what the deadline is, where the software will be deployed, the skills of the developers, and many other factors. Every architecture case is different, and each faces its own unique problems. That’s why architecture is so difficult. Each of these factors needs to be taken into consideration when tackling an architecture case, and the trade-offs of every candidate solution need to be analyzed to find the best one for your specific case.

Architects generally collaborate with domain experts on defining the domain or business requirements, but one of the most important results is defining the things the software must do that are not directly linked to its functionality. These are the architecture characteristics mentioned earlier, for example availability, consistency, scalability, performance, and security.

It is generally not wise to try to maximize every single architecture characteristic in the design of a system. Instead, identify and focus on the key ones. Usually, a software application can focus on only a few characteristics, because improving one often affects others. For example, an increase in consistency could cause a decrease in availability: in some cases it is more important to show accurate data than possibly inaccurate data, even if that means parts of the application are temporarily unavailable. In addition, each characteristic that is prioritized increases the complexity of the overall design, which forces trade-offs between the different aspects.
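The consistency versus availability example above can be made concrete with a small sketch. It assumes a hypothetical read path with an authoritative primary store and a possibly stale cache; the names `read_balance`, `ReadResult`, and `PrimaryUnavailable` are invented for illustration and do not come from any specific framework.

```python
from dataclasses import dataclass


class PrimaryUnavailable(Exception):
    """Raised when the authoritative data store cannot be reached."""


@dataclass
class ReadResult:
    value: str
    stale: bool  # True when the value came from the cache and may be outdated


def read_balance(primary_up: bool, primary_value: str, cached_value: str,
                 prefer_consistency: bool) -> ReadResult:
    """Read a value, choosing between consistency and availability on failure."""
    if primary_up:
        # Normal case: the authoritative value is available.
        return ReadResult(primary_value, stale=False)
    if prefer_consistency:
        # Consistency first: refuse to answer rather than show outdated data.
        raise PrimaryUnavailable("refusing to serve possibly stale data")
    # Availability first: keep answering, but flag the value as possibly stale.
    return ReadResult(cached_value, stale=True)


if __name__ == "__main__":
    print(read_balance(False, "100", "90", prefer_consistency=False))
```

Neither branch is correct in general. Which one fits depends on whether showing an outdated value or showing nothing is the more expensive failure for the business.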

Architectural planning is all about the trade-offs between different approaches. Determining the optimal solution for the case at hand, and understanding why an application should be built in a specific way and what that means, is what makes software architecture so valuable.

Types of architecture

When designing software, there is a plethora of architectural patterns available, each with its own strengths and weaknesses. Some of the most common types include:

Monolithic Architecture: A single, unified codebase where all components are tightly integrated. It’s simpler to develop initially but can become a burden as the software scales. While it offers straightforward development and deployment, maintenance can be challenging when the application grows, as even minor changes may require rebuilding and redeploying the entire system.

Microservices Architecture: This divides the software into small, independent services that communicate with each other. Each service handles a specific function and can be developed, deployed, and scaled independently. This architecture is great for scalability and flexibility but requires careful management of communication between services and adds complexity as the system grows.

Layered Architecture: A traditional approach where the system is divided into layers, such as presentation, business logic, and data access. Each layer handles specific tasks, making it easier to manage and test. However, it may become rigid and harder to adapt for complex or rapidly evolving systems.

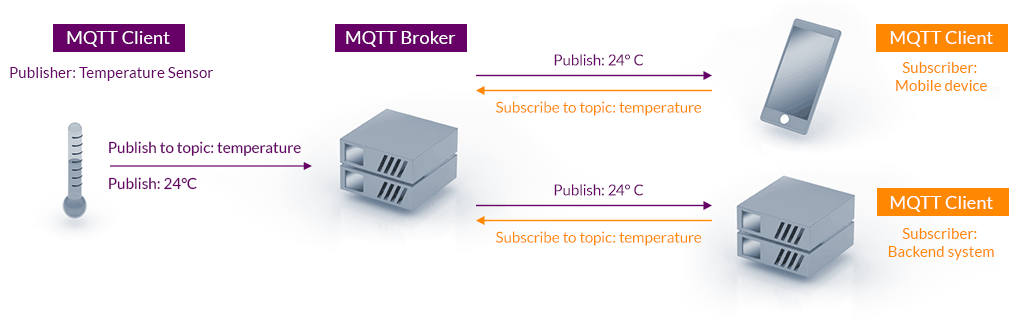

Event-Driven Architecture: Focused on responding to events (e.g., user actions, data updates), this pattern is useful for systems that need to be highly responsive. It enables real-time processing and scalability but requires careful design of event flows to avoid bottlenecks or data inconsistencies.

Serverless Architecture: A cloud-based approach where the application’s backend runs on demand on infrastructure managed by the cloud provider, so the team does not need to operate dedicated servers. It can reduce operational costs and simplifies scaling but is highly dependent on third-party cloud providers, which could lead to vendor lock-in.

Each type of architecture comes with trade-offs, and the best choice depends on your business drivers, development team, deadlines, budget and many other factors. For example, startups might opt for monolithic or serverless architectures for faster time-to-market, while large enterprises may prefer microservices to handle complex, large-scale systems.
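To give one of these styles a concrete shape, the event-driven pattern described above can be sketched as a tiny in-process publish/subscribe mechanism. This is a simplified illustration under stated assumptions: the `EventBus` class, the `order_placed` event name, and the handlers are hypothetical, and a real system would typically use a message broker with asynchronous delivery instead of direct function calls.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Minimal synchronous event bus: producers and consumers only share event names."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)  # event type -> list of handlers

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the payload to every subscriber; return how many were notified."""
        handlers = self._handlers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


if __name__ == "__main__":
    bus = EventBus()
    # Two hypothetical consumers react to the same event without knowing each other.
    bus.subscribe("order_placed", lambda e: print("send confirmation for", e["order_id"]))
    bus.subscribe("order_placed", lambda e: print("reserve stock for", e["order_id"]))
    bus.publish("order_placed", {"order_id": 42})
```

The producer only knows the event name, not who consumes it. That is the source of both the flexibility and the need to design event flows carefully.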

Possibilities and challenges

Possibilities

A well-planned software architecture opens up numerous opportunities for your business:

- Customizable Solutions: Tailor the software to meet unique needs and adapt to changing demands. For example, modular architecture allows specific features to be added or upgraded without affecting the entire system.

- Faster Market Entry: Modular architectures, like microservices, enable faster deployment cycles and iterative improvements. This can help businesses roll out features quickly to stay ahead of competitors.

- Integration: Seamlessly connect with other tools, platforms, and APIs. This is especially important for businesses looking to integrate with third-party services, such as payment gateways, CRM systems, or analytics platforms.

- Innovation: With the right architecture, your business can experiment with emerging technologies like artificial intelligence, integrating them into existing systems with minimal disruption.

- Team Expertise: With a well-planned architecture that fits the skills of the development team, development work, onboarding, and delivering a quality product all become easier.

Challenges

However, there are also challenges to consider:

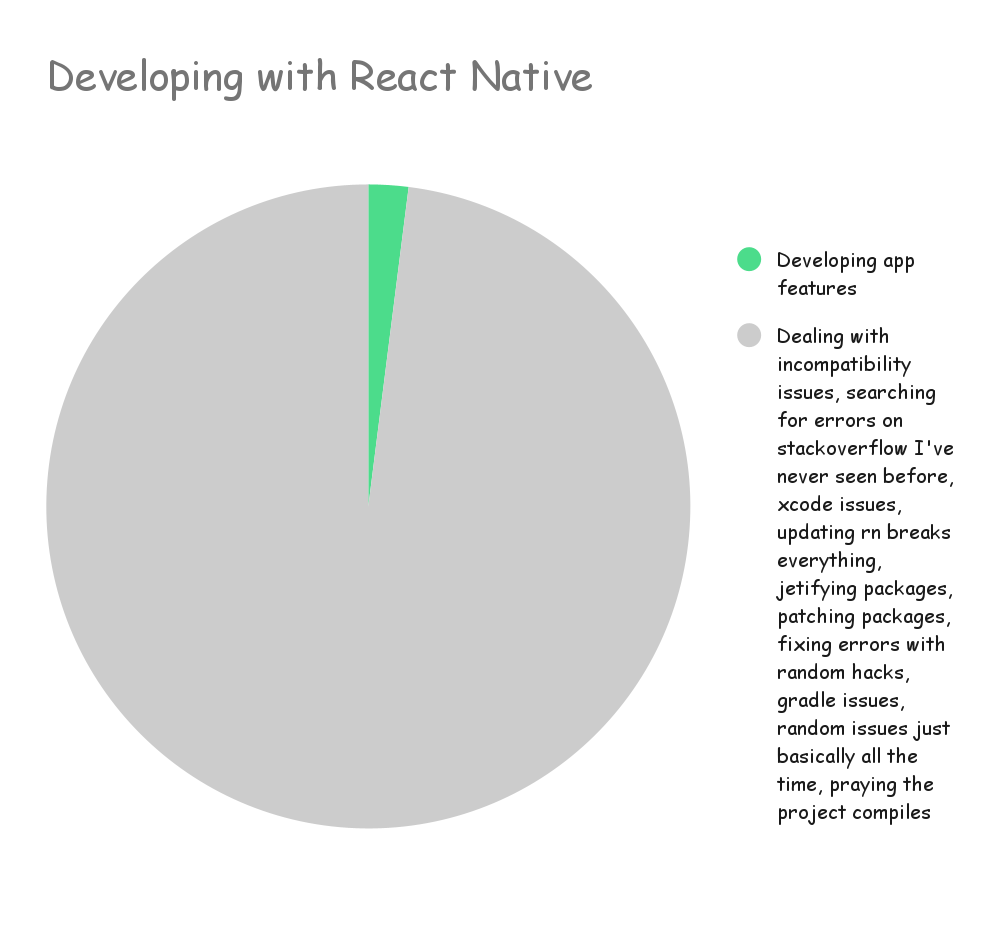

- Initial Complexity: Designing a well-thought-out architecture requires expertise and time. Teams need to anticipate future needs while ensuring current requirements are met, which can be difficult without experience.

- Cost: The upfront investment in architecture planning can be significant, though it’s often worth it in the long run. Costs include hiring experienced architects, acquiring tools, and potential delays in the project timeline during the planning phase.

- Evolving Technologies: Staying up to date with new tools and frameworks can be overwhelming. The technology landscape changes rapidly, and businesses must ensure that their chosen architecture doesn’t become obsolete or incompatible with future advancements.

- Team Expertise: Implementing complex architectures like microservices or event-driven systems may require specialized skills. Without proper training or hiring, teams may struggle to deliver a high-quality product.

Working with experienced developers or consultants and regularly reviewing architecture decisions can mitigate these challenges. The right approach helps your software remain robust, adaptable, and aligned with your long-term goals.

Summary

Software architecture is often referred to as the blueprint of the system or as the roadmap for developing a system. Often the answer lies within these definitions, but it is necessary to understand what the blueprint or the roadmap actually contains and why its decisions were made. The why is more important than the how.

While precisely defining software architecture is difficult, it can be seen as a combination of four factors: the structure of a system, architecture characteristics, architecture decisions and design principles.

In the end, architectural planning is all about the trade-offs between different approaches. Determining the optimal solution for the case at hand, and understanding why an application should be built in a specific way and what that means, is what makes software architecture so valuable.
